I'll explore this question for today's cloud, and touch upon what the future holds for cloud APIs.

Java? Python? Perl? PHP? Ruby? .NET?

It's tempting to think that the cloud speaks the programming language of whichever API you happen to be using. Don't be fooled: it doesn't.

"Wait," you say. "All these languages have Remote Procedure Call (RPC) mechanisms. Doesn't the cloud use them?"

No. The reason cloud providers don't expose a native RPC mechanism for every language is simple: would you want to support a product that had to understand the RPC mechanism of many languages? Would you want to add support for yet another RPC mechanism every time a new language became popular?

No? Neither do cloud providers.

So they use HTTP.

HTTP: It's a Protocol

The cloud speaks HTTP. HTTP is a protocol: it prescribes a specific on-the-wire representation for the traffic. Commands are sent to the cloud, and results returned, using the internet's most ubiquitous protocol: spoken by every browser and web server, routable by all routers, bridgeable by all bridges, and securable by a number of different methods, the most popular being HTTP over SSL/TLS (a.k.a. HTTPS). Language-specific RPC mechanisms cannot provide all of these benefits.

Cloud APIs all use HTTP under the hood. EC2, for example, uses HTTP in two different ways: the SOAP API and the Query API. SOAP wraps each call in an XML envelope in the body of the HTTP request and response. The Query API places all the parameters in the URL itself and returns plain XML (with no SOAP envelope) in the response.
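To make the difference concrete, here is a minimal Python sketch of how the same DescribeRegions call might be packaged in each style. It only builds the requests and never sends them. Real EC2 requests also carry version and signature parameters, which are omitted here; the service namespace `urn:example:ec2` and the helper function names are hypothetical placeholders, not Amazon's actual values.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlencode

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
SERVICE_NS = "urn:example:ec2"  # hypothetical placeholder, NOT EC2's real namespace


def query_api_request(endpoint, action, **params):
    """Query style: the operation and its arguments travel in the URL."""
    # Real EC2 requests also need Version and signing parameters (omitted here).
    query = urlencode({"Action": action, **params})
    return "GET", f"{endpoint}/?{query}", None


def soap_api_request(endpoint, action, **params):
    """SOAP style: the operation is wrapped in an XML envelope in the POST body."""
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{SERVICE_NS}}}{action}")
    for name, value in params.items():
        ET.SubElement(call, f"{{{SERVICE_NS}}}{name}").text = str(value)
    return "POST", endpoint + "/", ET.tostring(envelope, encoding="unicode")
```

Calling `query_api_request("http://ec2.amazonaws.com", "DescribeRegions")` yields a GET for `http://ec2.amazonaws.com/?Action=DescribeRegions` with no body, while the SOAP variant yields a POST whose body carries the same operation as XML. The operation is identical; only its packaging inside HTTP differs.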

So, the lingua franca of the cloud is HTTP.

But EC2's use of HTTP to transport the SOAP API and the Query API is not the only way to use HTTP.

HTTP: It's an API

HTTP itself can be used as a rudimentary API. HTTP has methods (GET, PUT, POST, DELETE), status codes, and conventions for passing arguments to the invoked method. Where SOAP wraps method calls in XML, and the Query API encodes them as URL parameters (e.g. http://ec2.amazonaws.com/?Action=DescribeRegions), HTTP itself can express those same operations. For example:

```http
GET /regions HTTP/1.1
Host: cloud.example.com
Accept: */*
```

Using raw HTTP methods, we can model a simple API as follows:

- HTTP GET is used as a "getter" method.

- HTTP PUT and POST are used as "setter" or "constructor" methods.

- HTTP DELETE is used to delete resources.

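An API modeled this way, with resources identified by URLs and manipulated through the standard HTTP methods, is usually called RESTful. RESTful APIs are "close to the metal": they do not require a higher-level object model to be usable by servers or clients, because they use bare HTTP constructs.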
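The method mapping above can be sketched in a few lines of Python. This is a toy, in-memory dispatcher rather than a real server: `ResourceStore`, its paths, and its JSON bodies are hypothetical, and the sketch only shows how GET, PUT, POST, and DELETE line up with getter, setter, constructor, and delete operations, with HTTP status codes serving as return values.

```python
import itertools
import json


class ResourceStore:
    """Hypothetical in-memory 'cloud' whose whole API is HTTP verbs on paths."""

    def __init__(self):
        self._resources = {}            # path -> JSON-serializable state
        self._ids = itertools.count(1)  # ids handed out by POST

    def handle(self, method, path, body=None):
        """Dispatch one request; returns (status, headers, body)."""
        if method == "GET":  # getter
            if path not in self._resources:
                return 404, {}, None
            return 200, {"Content-Type": "application/json"}, json.dumps(self._resources[path])
        if method == "PUT":  # setter: create or replace at a client-chosen URL
            created = path not in self._resources
            self._resources[path] = json.loads(body)
            return (201 if created else 200), {}, None
        if method == "POST":  # constructor: the server chooses the new URL
            new_path = f"{path.rstrip('/')}/{next(self._ids)}"
            self._resources[new_path] = json.loads(body)
            return 201, {"Location": new_path}, None
        if method == "DELETE":  # delete
            if path not in self._resources:
                return 404, {}, None
            del self._resources[path]
            return 204, {}, None
        return 405, {"Allow": "GET, PUT, POST, DELETE"}, None
```

On a fresh store, `store.handle("PUT", "/regions", '["region-a"]')` returns `(201, {}, None)`, and a later `GET /regions` returns the stored list. The PUT/POST split follows the usual HTTP convention: with PUT the client names the resource, while with POST the server picks the new URL and reports it in a `Location` header.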

Unfortunately, EC2's APIs are not RESTful. Amazon was the undisputed leader in bringing cloud to the masses, and its cloud API was built before RESTful principles were popular and well understood.

Why Should the Cloud Speak RESTful HTTP?

Many benefits can be gained by having the cloud speak RESTful HTTP. For example:

- The cloud can be operated directly from the command line using curl, with no language-specific libraries needed.

- Operations require less parsing and higher-level modeling because they are represented close to the "native" HTTP layer.

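- Cache control, conditional retrieval (using entity tags, which are often content hashes), alternate representations of the same resource, and similar features come for free through the standard HTTP headers (Cache-Control, ETag and If-None-Match, Accept). No API-specific mechanism has to be designed for them.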

- Anything that can run a web server can be a cloud. Your embedded device can easily advertise itself as a cloud and make its processing power available for use via a lightweight HTTP server.
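Several of these benefits can be seen together in a small sketch that uses only Python's standard library: an `http.server`-based endpoint (the kind of thing a small device could run) that serves a hypothetical `/regions` resource with `ETag` and `Cache-Control` headers, plus a stdlib-only client that performs a conditional GET. The resource, its contents, and the helper names are assumptions for illustration.

```python
import hashlib
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical resource served by our tiny "cloud".
REGIONS = json.dumps(["region-a", "region-b"]).encode()
REGIONS_ETAG = '"' + hashlib.sha256(REGIONS).hexdigest()[:16] + '"'


class CloudHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/regions":
            self.send_error(404)
            return
        # Conditional retrieval: if the client's cached copy is current, send no body.
        if self.headers.get("If-None-Match") == REGIONS_ETAG:
            self.send_response(304)
            self.send_header("ETag", REGIONS_ETAG)
            self.end_headers()
            return
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("ETag", REGIONS_ETAG)
        self.send_header("Cache-Control", "max-age=60")
        self.send_header("Content-Length", str(len(REGIONS)))
        self.end_headers()
        self.wfile.write(REGIONS)

    def log_message(self, *args):  # keep the demo quiet
        pass


def start_server():
    """Start the server on a free localhost port in a background thread."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), CloudHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


# Plain-stdlib client; ProxyHandler({}) bypasses any configured proxy for this local demo.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def get(url, etag=None):
    """Issue a (possibly conditional) GET; returns (status, etag, body)."""
    req = urllib.request.Request(url)
    if etag:
        req.add_header("If-None-Match", etag)
    try:
        with _opener.open(req) as resp:
            return resp.status, resp.headers.get("ETag"), resp.read()
    except urllib.error.HTTPError as err:  # urllib raises for 304 as well as 4xx
        return err.code, err.headers.get("ETag"), b""
```

While the server is running, `curl -i http://127.0.0.1:PORT/regions` (with PORT taken from `server.server_address`) shows the same headers from the command line, and repeating the request with `-H 'If-None-Match: <etag>'` returns `304 Not Modified` with no body. Nothing here is cloud-specific code: caching and conditional retrieval are plain HTTP.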

Where are Cloud API Standards Headed?

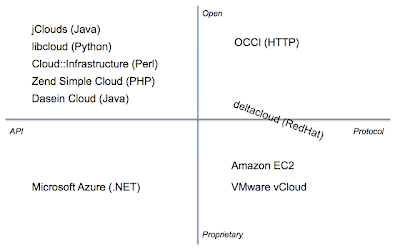

There are many cloud API standardization efforts. Some groups are creating open standards that involve all industry stakeholders and let you (the developer) use or implement them without fear of infringing anyone's IP. Others are not open, so those guarantees cannot be made. Some define language-specific APIs; others define HTTP-based APIs (RESTful or not).

The following are some popular cloud APIs:

- jClouds

- libcloud

- Cloud::Infrastructure

- Zend Simple Cloud API

- Dasein Cloud API

- Open Cloud Computing Interface (OCCI)

- Microsoft Azure

- Amazon EC2

- VMware vCloud

- Deltacloud

Here's how these APIs compare, based on the following criteria:

- Open: The specification is available for anyone to implement without licensing IP, and the API was designed in a process open to the public.

- Proprietary: The specification is IP-encumbered, or it was developed without the free involvement of all ecosystem participants (providers, ISVs, SIs, developers, end users).

- API: The standard defines a language-specific API; a particular programming language is required to use it.

- Protocol: The standard defines an on-the-wire protocol (HTTP).

This chart shows the following:

- There are many language-specific APIs, most open-source.

- Proprietary standards are the dominant players in the marketplace today.

- OCCI is the only completely open standard defining a protocol.

- Deltacloud was started by Red Hat and is currently open, but its initial development was closed and did not involve players from across the ecosystem (hence its position on the border between Open and Proprietary).

What Does This Mean for the Cloud Developer?

In the future, a single protocol will be used to operate multiple cloud providers. Libraries will still exist for every language, and they will be able to control any standards-compliant cloud. In this world, a RESTful API based on HTTP is a highly attractive option.
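A minimal sketch of what such a library might look like, assuming a hypothetical standardized protocol in which every provider exposes a `/servers` collection that accepts and returns JSON. The client code is identical for every provider; only the base URL changes. The class, paths, and payloads are illustrative assumptions, not part of OCCI or any other specification.

```python
import json
import urllib.request


class CloudClient:
    """Hypothetical thin client for a standardized RESTful cloud protocol.

    The only provider-specific input is the base URL; the paths used below
    are illustrative, not taken from any real specification.
    """

    def __init__(self, base_url):
        self.base_url = base_url.rstrip("/")

    def request(self, method, path, payload=None):
        """Build (but do not send) the HTTP request for one operation."""
        data = None if payload is None else json.dumps(payload).encode()
        req = urllib.request.Request(self.base_url + path, data=data, method=method)
        req.add_header("Accept", "application/json")
        if data is not None:
            req.add_header("Content-Type", "application/json")
        return req

    def send(self, req):
        """Send a request and decode a JSON response body, if any."""
        with urllib.request.urlopen(req) as resp:
            body = resp.read()
            return json.loads(body) if body else None

    def list_servers(self):
        return self.send(self.request("GET", "/servers"))

    def create_server(self, spec):
        return self.send(self.request("POST", "/servers", spec))
```

Switching providers then means constructing `CloudClient("https://cloud-b.example.org")` instead of `CloudClient("https://cloud-a.example.com")`; the request-building logic does not change, which is the practical payoff of a shared protocol.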

I highly recommend taking a look at the work being done in OCCI, an open standard that reflects the needs of the entire ecosystem. It'll be in your future.

Update 27 October 2009:

Further Reading

No mention of cloud APIs would be complete without reference to William Vambenepe's articles on the subject: